Alta Graham

Toward Robust Image Classification

Sep 19, 2019

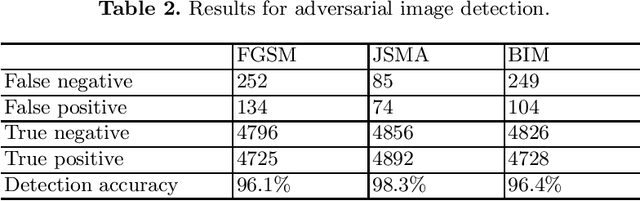

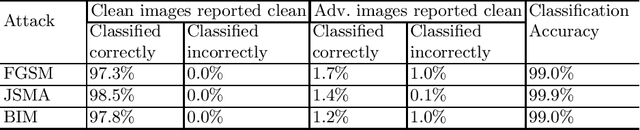

Abstract:Neural networks are frequently used for image classification, but can be vulnerable to misclassification caused by adversarial images. Attempts to make neural network image classification more robust have included variations on preprocessing (cropping, applying noise, blurring), adversarial training, and dropout randomization. In this paper, we implemented a model for adversarial detection based on a combination of two of these techniques: dropout randomization with preprocessing applied to images within a given Bayesian uncertainty. We evaluated our model on the MNIST dataset, using adversarial images generated using Fast Gradient Sign Method (FGSM), Jacobian-based Saliency Map Attack (JSMA) and Basic Iterative Method (BIM) attacks. Our model achieved an average adversarial image detection accuracy of 97%, with an average image classification accuracy, after discarding images flagged as adversarial, of 99%. Our average detection accuracy exceeded that of recent papers using similar techniques.

* 2019 Intelligent Systems Conference, pp 483-489

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge